Proposed Title:

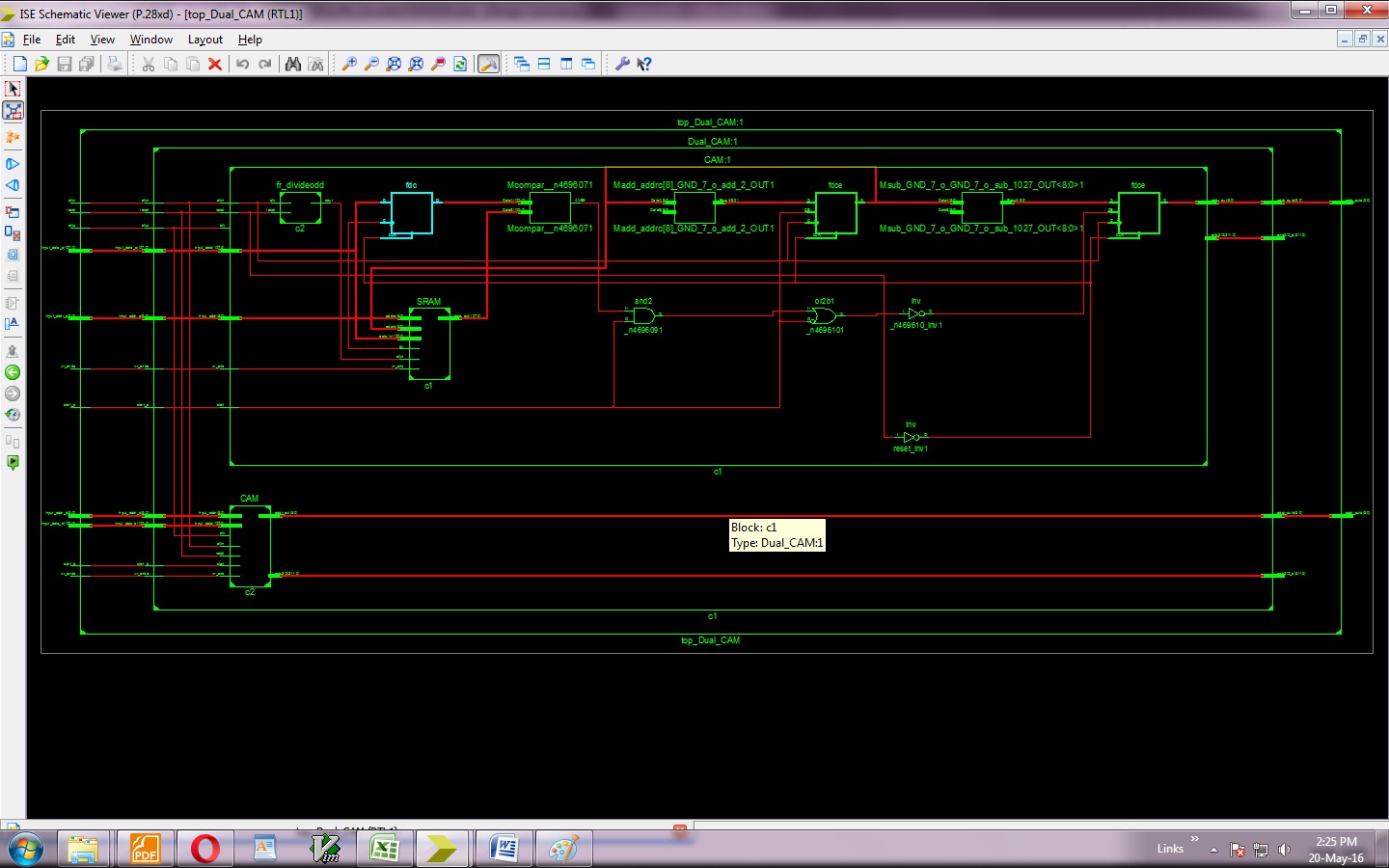

FPGA Implementation of Low-Power DDR-Based Dual Content-Addressable Memory

Proposed System:

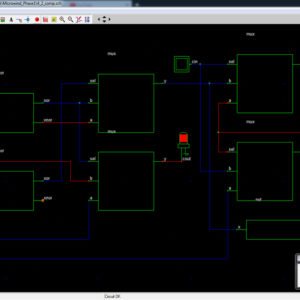

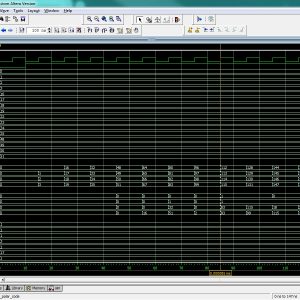

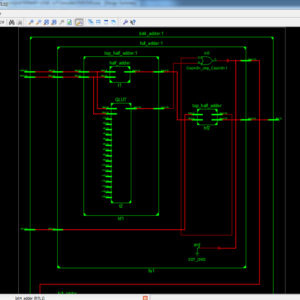

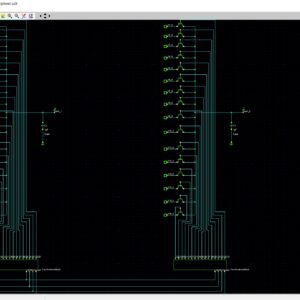

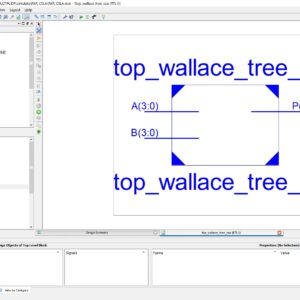

- The proposed architecture performs content-addressable lookup of 128-bit input data words stored in memory, using the SCN-CAM (sparse clustered network CAM) methodology.

- The architecture is then modified to support a DDR (double data rate) based dual CAM design.
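To make the two-stage lookup easier to picture, here is a minimal behavioural sketch in Python. It is not the project's hardware design, and several details are assumptions. The low 16 bits of each 128-bit word act as a reduced tag split into 4 clusters of 4 bits. Each cluster value selects one "neuron" whose link mask points at the stored entries, and only entries linked from every cluster go on to the full 128-bit comparison. The "DDR dual" part is modelled as two searches per clock cycle, one on the rising edge and one on the falling edge, against the same stored contents. The names `SketchSCNCAM`, `ddr_dual_search`, `TAG_BITS` and `CLUSTERS` are hypothetical.

```python
from typing import List, Optional, Tuple

WORD_BITS = 128                       # data width stated for the design
TAG_BITS = 16                         # assumption: low 16 key bits drive pre-classification
CLUSTERS = 4                          # assumption: tag split into 4 clusters
CLUSTER_BITS = TAG_BITS // CLUSTERS   # 4 bits, i.e. 16 "neurons" per cluster
WORD_MASK = (1 << WORD_BITS) - 1


class SketchSCNCAM:
    """Hypothetical two-stage CAM: SCN-style candidate selection, then full compare."""

    def __init__(self, depth: int) -> None:
        self.depth = depth
        self.entries: List[Optional[int]] = [None] * depth
        # links[c][v] is a bitmask of entries whose tag holds value v in cluster c
        self.links = [[0] * (1 << CLUSTER_BITS) for _ in range(CLUSTERS)]

    def _clusters(self, word: int) -> List[int]:
        """Split the reduced tag into one active neuron index per cluster."""
        tag = word & ((1 << TAG_BITS) - 1)
        mask = (1 << CLUSTER_BITS) - 1
        return [(tag >> (c * CLUSTER_BITS)) & mask for c in range(CLUSTERS)]

    def write(self, addr: int, word: int) -> None:
        """Store a 128-bit word and update the cluster-to-entry links."""
        word &= WORD_MASK
        old = self.entries[addr]
        if old is not None:               # unlink the word being overwritten
            for c, v in enumerate(self._clusters(old)):
                self.links[c][v] &= ~(1 << addr)
        self.entries[addr] = word
        for c, v in enumerate(self._clusters(word)):
            self.links[c][v] |= 1 << addr

    def search(self, key: int) -> Optional[int]:
        """Return the lowest matching address, or None on a miss."""
        key &= WORD_MASK
        # Stage 1: AND the link masks of the active neuron in every cluster
        candidates = (1 << self.depth) - 1
        for c, v in enumerate(self._clusters(key)):
            candidates &= self.links[c][v]
        # Stage 2: full 128-bit comparison, only for surviving candidates
        for addr in range(self.depth):
            if (candidates >> addr) & 1 and self.entries[addr] == key:
                return addr
        return None


def ddr_dual_search(cam: SketchSCNCAM,
                    keys: List[int]) -> List[Tuple[Optional[int], Optional[int]]]:
    """Assumed DDR model: one key on the rising edge, one on the falling edge."""
    results = []
    for i in range(0, len(keys), 2):
        rising = cam.search(keys[i])
        falling = cam.search(keys[i + 1]) if i + 1 < len(keys) else None
        results.append((rising, falling))
    return results


if __name__ == "__main__":
    cam = SketchSCNCAM(depth=8)
    cam.write(0, 0xDEADBEEF)
    cam.write(3, (1 << 127) | 0x1234)
    # Two clock cycles of lookups
    print(ddr_dual_search(cam, [0xDEADBEEF, (1 << 127) | 0x1234, 0x5555]))
    # prints [(0, 3), (None, None)]
```

In this sketch, a key such as `0x1234` shares its low 16 bits with the entry at address 3. It therefore survives stage 1 as a candidate but is rejected by the full comparison in stage 2, so `search` returns `None`. The point of the split is that the wide comparison runs only on the few candidates rather than on every entry.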

Advantages:

- Increased Speed

- Less Area

- Low power consumption
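One illustrative reason an SCN-CAM style pre-classification stage can lower power is that fewer full-width comparisons are performed per search. Assume, hypothetically, $M$ stored entries and a $q$-bit reduced tag whose values are uniformly and independently distributed. For a key that is present, its own entry is always a candidate, and each of the other $M - 1$ entries matches the tag with probability $2^{-q}$. The expected number of full 128-bit comparisons is then:

$$
E[N_{\text{cmp}}] = 1 + (M - 1)\,2^{-q}
$$

For example, with $M = 256$ and $q = 16$ this gives $1 + 255/65536 \approx 1.004$ full comparisons per search, against $256$ for a conventional CAM that compares every entry. These figures are illustrative under the stated assumptions and are not measured results for this design.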

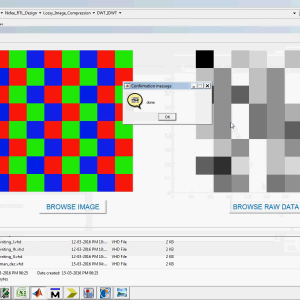

Software Tools:

- ModelSim 6.5b

- Xilinx ISE 14.2
