Proposed Title:

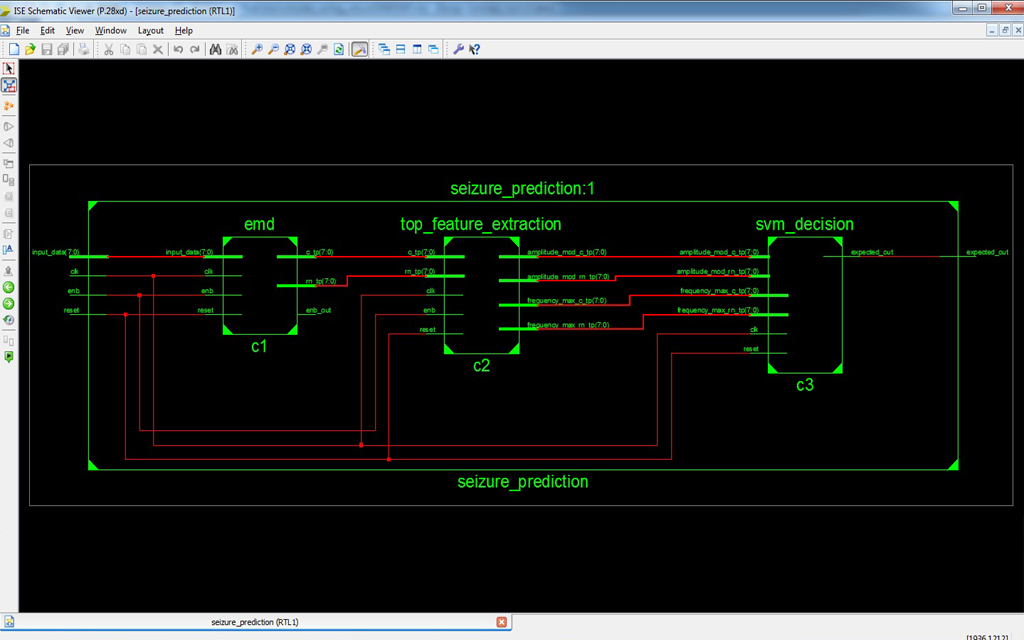

Low-Power Novel Empirical Mode Decomposition-Based Seizure Prediction Using the Hilbert-Huang Transform

Proposed System:

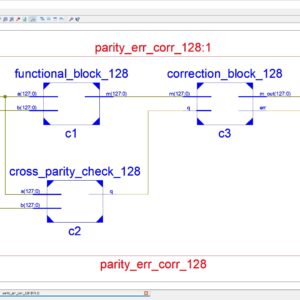

- Real-time empirical mode decomposition for seizure prediction

- Low power and area efficient
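As a rough illustration of the empirical mode decomposition step named above, the following Python sketch extracts one intrinsic mode function (IMF) by repeated sifting. It is not the project's HDL design. The function names (`local_extrema`, `linear_envelope`, `sift_imf`, `zero_crossings`) are hypothetical, and the envelopes use piecewise-linear interpolation as a simplifying assumption in place of the cubic splines of standard EMD. The Hilbert transform stage is not shown.

```python
import math


def local_extrema(x):
    """Return index lists of interior local maxima and minima of x."""
    maxima, minima = [], []
    for i in range(1, len(x) - 1):
        if x[i - 1] < x[i] >= x[i + 1]:
            maxima.append(i)
        elif x[i - 1] > x[i] <= x[i + 1]:
            minima.append(i)
    return maxima, minima


def linear_envelope(x, idx):
    """Piecewise-linear envelope through x at idx.

    Assumption: the first and last samples are used as extra knots,
    a crude way of handling the signal boundaries.
    """
    knots = [0] + idx + [len(x) - 1]
    env = [0.0] * len(x)
    for a, b in zip(knots, knots[1:]):
        for i in range(a, b + 1):
            t = (i - a) / (b - a)
            env[i] = x[a] + t * (x[b] - x[a])
    return env


def sift_imf(x, max_iter=10, tol=1e-3):
    """Extract one IMF candidate from x; return (imf, residue)."""
    h = list(x)
    for _ in range(max_iter):
        maxima, minima = local_extrema(h)
        if len(maxima) < 2 or len(minima) < 2:
            break  # too few extrema to build envelopes
        upper = linear_envelope(h, maxima)
        lower = linear_envelope(h, minima)
        mean = [(u + l) / 2 for u, l in zip(upper, lower)]
        h = [v - m for v, m in zip(h, mean)]
        energy = sum(v * v for v in h)
        # Stop once the envelope mean is small relative to the candidate IMF.
        if energy == 0 or sum(m * m for m in mean) / energy < tol:
            break
    residue = [a - b for a, b in zip(x, h)]
    return h, residue


def zero_crossings(x):
    """Count strict sign changes between consecutive samples."""
    return sum(1 for a, b in zip(x, x[1:]) if a * b < 0)


if __name__ == "__main__":
    # Synthetic test signal (not EEG data): a 40 Hz tone on a 3 Hz drift.
    fs = 1000
    signal = [math.sin(2 * math.pi * 40 * n / fs) + math.sin(2 * math.pi * 3 * n / fs)
              for n in range(fs)]
    imf, residue = sift_imf(signal)
    print("IMF zero crossings:", zero_crossings(imf))
    print("Residue zero crossings:", zero_crossings(residue))
```

The IMF and residue sum back to the input by construction, so calling `sift_imf` again on the residue would give the next, slower component.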

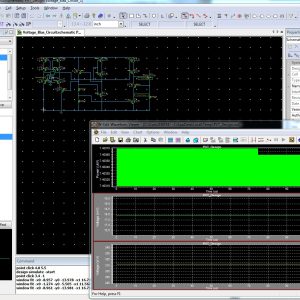

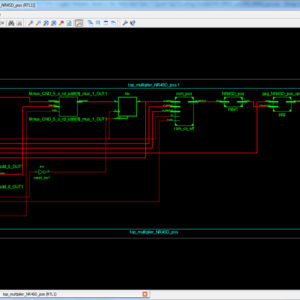

Software implementation:

- Xilinx: HDL implementation
