Proposed Title:

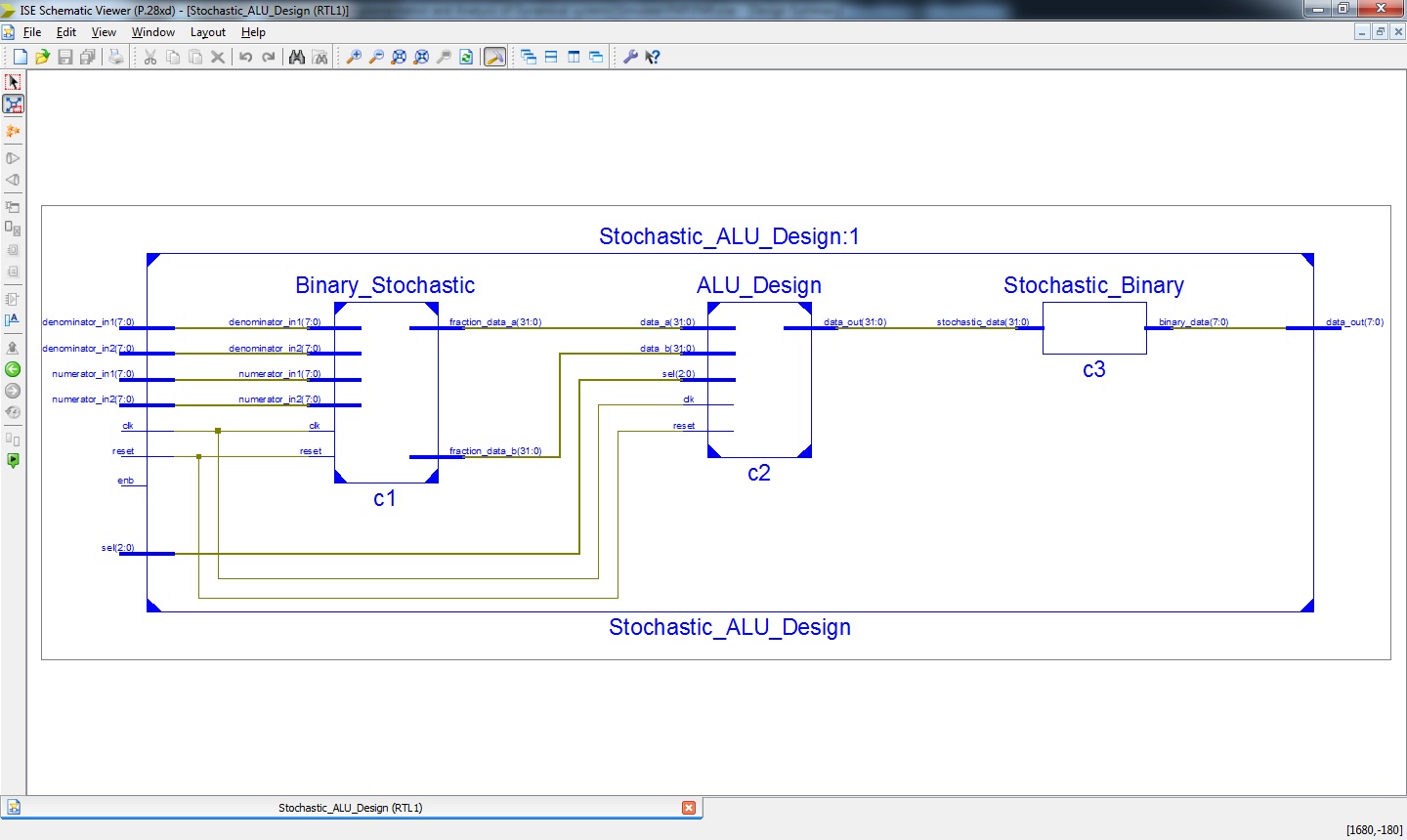

FPGA Implementation of Stochastic and Analysis of Dynamical System using Arithmetic Logic Unit – ALU Design

Improvement of this Project:

- In this paper we propose a new ALU design that performs stochastic computation and dynamical-system analysis using stochastic numbers.

Software implementation:

- ModelSim

- Xilinx 14.2

Proposed System:

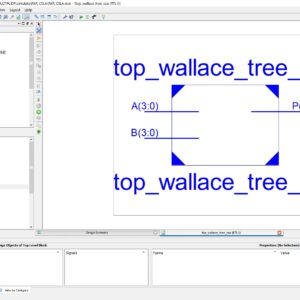

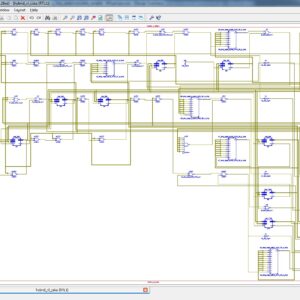

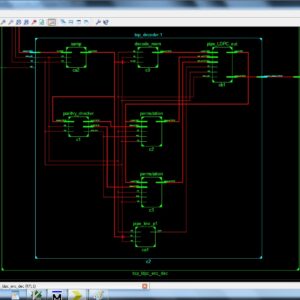

Floating point is a system for representing numbers that are too large or too small to be represented as integers. Compared with fixed-point representation, which is constrained by its limited word size, floating point retains its resolution and accuracy, but it does not operate on random bit streams. Stochastic computing (SC) is a digital computation approach that operates on random bit streams to perform complex tasks with much smaller hardware than conventional binary radix approaches. It is characterized by its use of pseudo-random 0-1 sequences called stochastic numbers (SNs), which are interpreted as probabilities. Accuracy is usually assumed to depend on the interacting SNs being highly independent or uncorrelated, in a loosely specified way.

This paper introduces a new approach to stochastic computation and dynamical-system analysis with an ALU design. Existing floating-point ALU designs do not implement a stochastic approach, so the proposed design implements stochastic computing in the ALU. Following a top-down design approach, four arithmetic modules (addition, subtraction, multiplication, and division) are combined to form a stochastic ALU. Each module is divided into sub-modules, and two selection bits are combined to select a particular operation. The modules are independent of each other. They are realized and validated using VHDL simulation and synthesized in Xilinx 14.2, and the results are compared in terms of area, power, and delay.
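To illustrate how stochastic numbers are interpreted as probabilities, here is a minimal software sketch in Python. It is not the project's VHDL design. It assumes the unipolar encoding, in which the value of a stream is the fraction of 1s, and it shows two textbook SC elements: multiplication with a bitwise AND, and scaled addition with a multiplexer. The function names (`to_stream`, `value`, `sc_multiply`, `sc_scaled_add`) are hypothetical and chosen only for this example.

```python
import random


def to_stream(p, n, rng):
    """Encode a probability p in [0, 1] as an n-bit unipolar stochastic number."""
    return [1 if rng.random() < p else 0 for _ in range(n)]


def value(stream):
    """Interpret a bit stream as a probability: the fraction of 1s."""
    return sum(stream) / len(stream)


def sc_multiply(a, b):
    """Bitwise AND of two independent streams; estimates value(a) * value(b)."""
    return [x & y for x, y in zip(a, b)]


def sc_scaled_add(a, b, select):
    """Multiplexer: pick the bit of a where select is 1, else the bit of b.

    With a select stream of value 0.5, this estimates (value(a) + value(b)) / 2.
    """
    return [x if s else y for x, y, s in zip(a, b, select)]


if __name__ == "__main__":
    rng = random.Random(0)   # fixed seed so the run is repeatable
    n = 4096                 # stream length; longer streams give lower error
    a = to_stream(0.5, n, rng)
    b = to_stream(0.4, n, rng)
    sel = to_stream(0.5, n, rng)
    print("a * b     ~", value(sc_multiply(a, b)))         # close to 0.20
    print("(a + b)/2 ~", value(sc_scaled_add(a, b, sel)))  # close to 0.45
```

The results are estimates, and they are only close to the exact products and sums when the input streams are independent. For example, ANDing a stream with itself returns the same stream rather than its square, which is why correlation between SNs affects accuracy.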


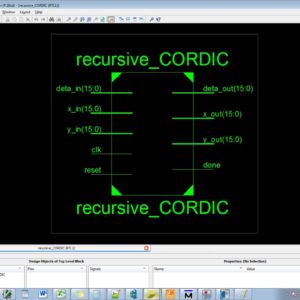

Stochastic Implementation and Analysis of Dynamical Systems Similar to the Logistic Map



- Powered by NXFEE INNOVATION, Pondicherry.
